This article presents our approach to solving Boolean algebra equations.

The Idea

It was the beginning of our school year when we started learning Boolean algebra. We had trouble understanding all the rules of minimization but didn't care about it at the time. When we got our exams back, we had barely passed. We were pretty shocked, since we had never had trouble with computer science before. Two months later, it was time to register for the national competition in software engineering. We decided to build something that would help students better understand how Boolean algebra works, and so we created Logicam.

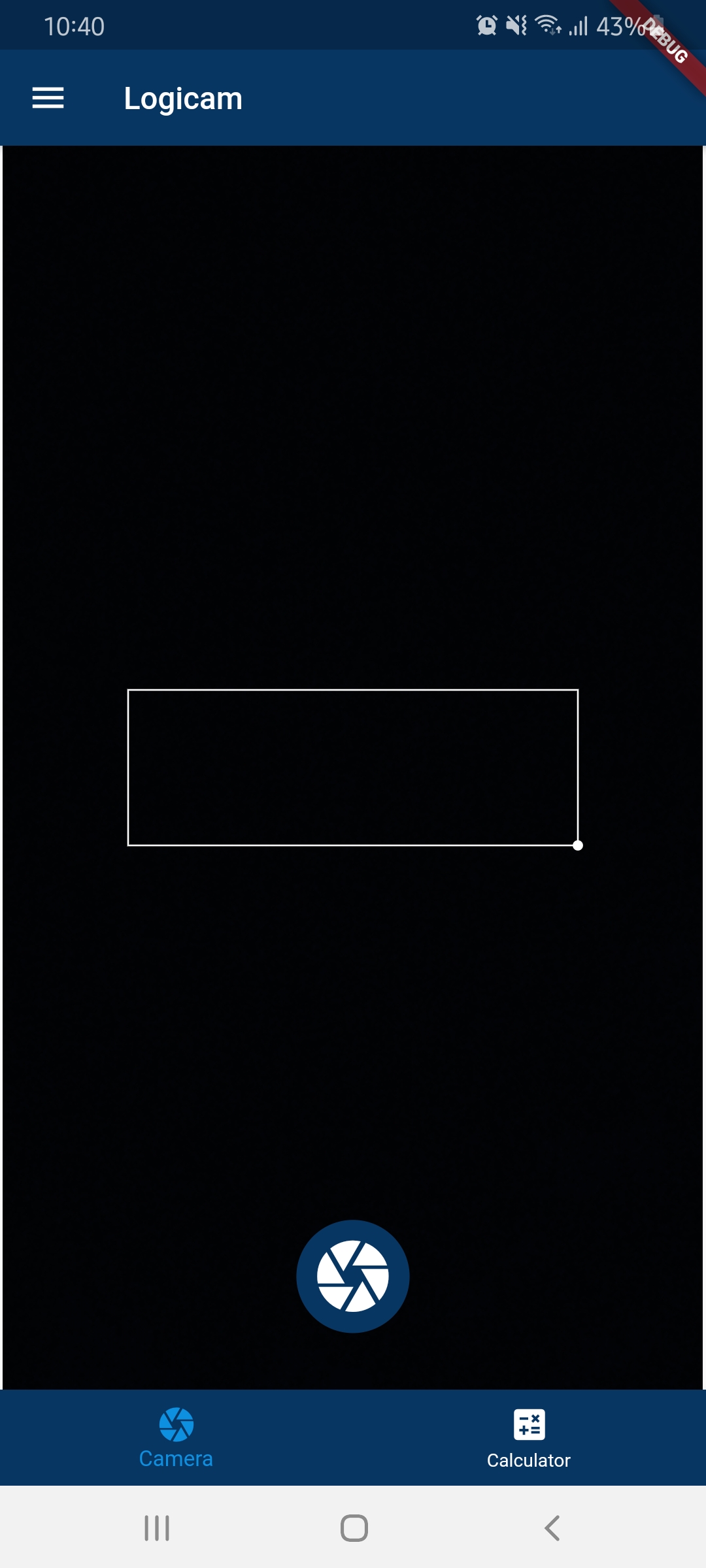

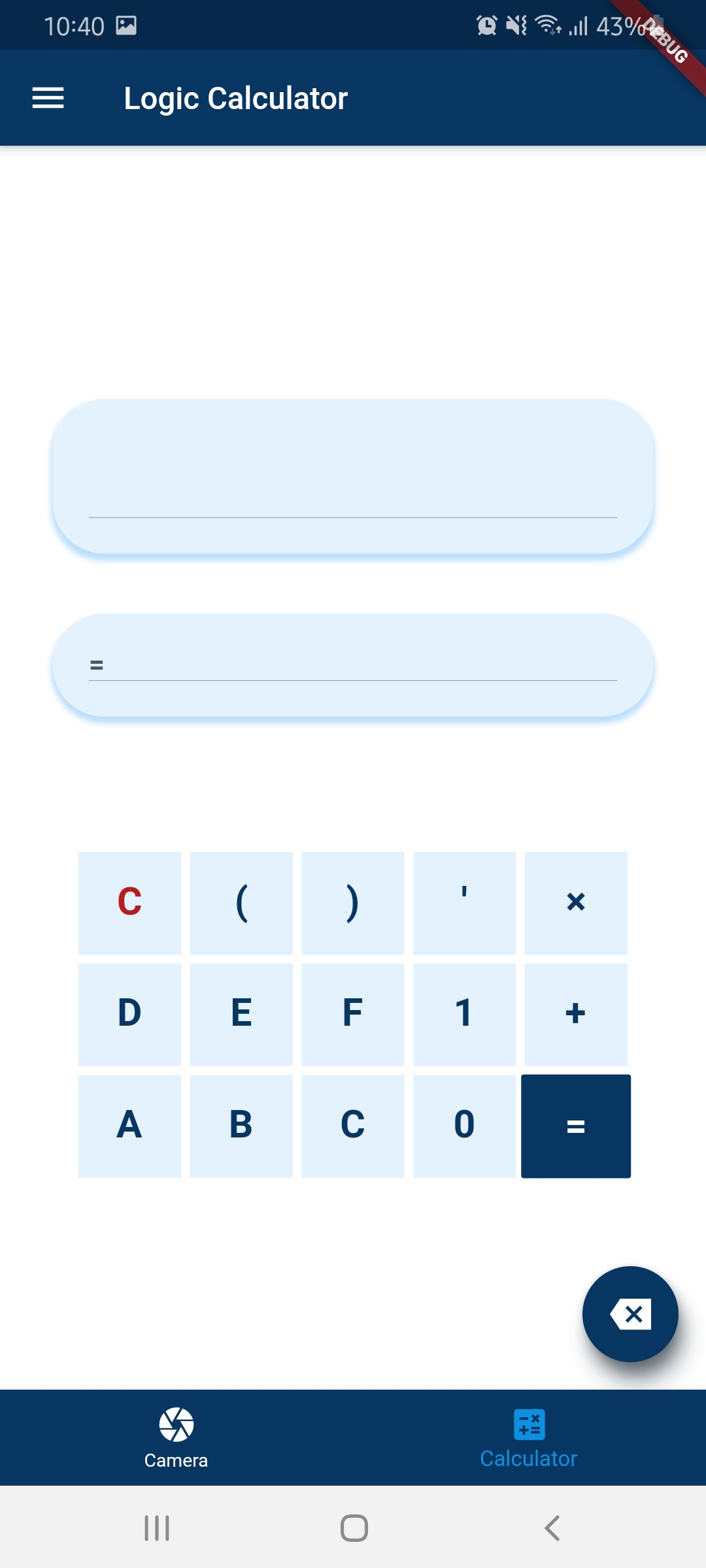

What is Logicam?

Logicam is a mobile app written in Flutter. Users take a picture of the equation they want to minimize, and the app automatically returns a solution. Each picture is first processed by our custom-made OCR software, which detects and recognizes all of the letters and symbols and passes them to a script that solves the equation. The result is then displayed on the screen. Besides taking pictures, users can also type equations using a custom-made keyboard.
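As a small illustrative example of what minimizing an equation means (the expression is chosen here for illustration and is not taken from the app), a three-term expression can be reduced to two literals using the idempotence, distributive, and complement laws:

```latex
\begin{aligned}
A B + A\overline{B} + \overline{A} B
  &= A B + A\overline{B} + A B + \overline{A} B
     && \text{idempotence: } AB = AB + AB \\
  &= A\,(B + \overline{B}) + B\,(A + \overline{A})
     && \text{distributive law} \\
  &= A + B
     && \text{complement: } X + \overline{X} = 1
\end{aligned}
```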

Technologies

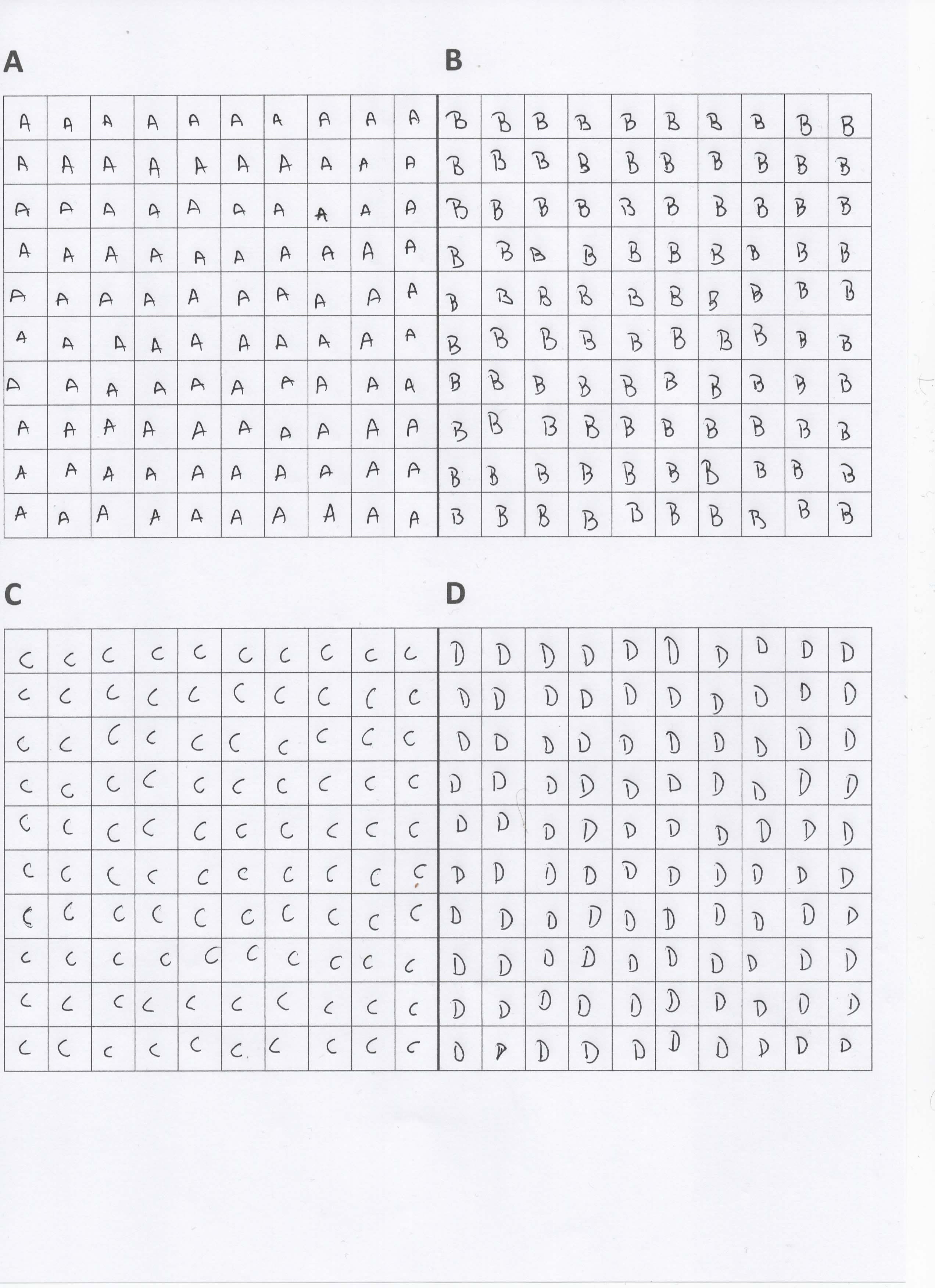

Data preparation

Collecting training data was one of our biggest challenges. We created a table in Word, printed it on paper, and gave the sheets to our friends so they could fill them in with different symbols. This way we made sure we had a variety of handwriting for our AI to learn from.

The filled-out sheets were scanned, and each square of the table was turned into a single picture for the AI to learn from. For that, we wrote a script that uses OpenCV to detect contours and extract every square as its own image.
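To give a feel for the cell-extraction step, here is a minimal dependency-free sketch. It is not the OpenCV script described above: instead of contour detection (which in OpenCV would typically involve `cv2.findContours` and `cv2.boundingRect`), it assumes a clean, axis-aligned grid in a binarized image (1 = dark, 0 = light) and finds the grid lines by counting dark pixels per row and column. All function names and the threshold are hypothetical.

```python
def dark_lines(image, threshold=0.9):
    """Indices of rows whose share of dark pixels is at least `threshold`."""
    return [i for i, row in enumerate(image)
            if sum(row) / len(row) >= threshold]


def gaps(lines, size):
    """(start, end) spans of consecutive indices that are not grid lines."""
    spans, start = [], None
    blocked = set(lines)
    for i in range(size + 1):
        inside = i < size and i not in blocked
        if inside and start is None:
            start = i                      # a cell begins here
        elif not inside and start is not None:
            spans.append((start, i))       # the cell ended just before i
            start = None
    return spans


def extract_cells(image):
    """Cut a binarized table scan into one small image per cell."""
    columns = [list(col) for col in zip(*image)]  # transpose to scan columns
    row_spans = gaps(dark_lines(image), len(image))
    col_spans = gaps(dark_lines(columns), len(columns))
    return [[row[c0:c1] for row in image[r0:r1]]
            for r0, r1 in row_spans
            for c0, c1 in col_spans]
```

Cells come back in reading order (left to right, top to bottom). Real scans are rarely this clean (skew, broken lines, noise), which is where contour-based detection is the more robust choice.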

Frontend

The frontend of the app was written in Flutter, using the Dart programming language. Flutter is Google's open-source framework for building beautiful, natively compiled, multi-platform applications. Today, Flutter is one of the most popular frameworks for mobile development and one of React Native's biggest rivals. It provides many widgets and packages that make it easier to integrate other open-source projects. This was our first time developing a mobile app and using Flutter.

Optical Character Recognition

Since we had always wanted to create our own AI, we decided to build our own OCR (Optical Character Recognition). To build the model, we used TensorFlow and Keras. The model solves a simple classification problem. Before we could pass the images to TensorFlow for training, we had to filter them. It was important to get the filtering right so that we would get the best results.

In the process, we used the Savitzky-Golay filter, which is available in the SciPy library. We then created a convolutional neural network and trained it on our custom dataset.
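For readers unfamiliar with the Savitzky-Golay filter, the sketch below shows the idea in plain Python on a 1-D signal: each sample is replaced by the value of a low-degree polynomial fitted to its neighbours, which reduces to a fixed weighted average. It uses the standard coefficients for a 5-point window and a quadratic fit. The window size, the edge handling, and the function name are assumptions made for illustration; how the filter was applied to the images is not described here. In SciPy, the equivalent function is `scipy.signal.savgol_filter(x, window_length, polyorder)`.

```python
# Savitzky-Golay convolution coefficients for a 5-point window and a
# quadratic fit; they sum to 1.
SG_5_2 = [-3 / 35, 12 / 35, 17 / 35, 12 / 35, -3 / 35]


def savgol_smooth(values, coeffs=SG_5_2):
    """Smooth a 1-D sequence; samples too close to the edges stay unchanged."""
    half = len(coeffs) // 2
    out = list(values)
    for i in range(half, len(values) - half):
        window = values[i - half:i + half + 1]
        out[i] = sum(c * v for c, v in zip(coeffs, window))
    return out
```

A useful property of this filter is that data which already follows a quadratic curve passes through unchanged, while isolated spikes are damped. That is why it tends to preserve the shape of strokes better than a plain moving average.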

The layers shown below are a flatten layer followed by three fully connected (dense) layers:

import tensorflow as tf

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, Flatten

model = Sequential()

#Flattens the 2D input image into a 1D vector

model.add(Flatten())

#Adds two fully connected layers of 256 neurons each, with ReLU activation

model.add(Dense(256, activation = tf.nn.relu))

model.add(Dense(256, activation = tf.nn.relu))

#Adds the output layer with 10 neurons (one per possible class); softmax turns the outputs into probabilities

model.add(Dense(10, activation = tf.nn.softmax))
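To illustrate what the final softmax layer produces, here is a small plain-Python sketch of how 10 raw output scores become probabilities and then a predicted symbol. The label list is hypothetical (only the number of classes, 10, is given above), and the function names are made up for this example.

```python
import math

# Hypothetical label set: only the class count (10) comes from the model.
LABELS = ["A", "B", "C", "D", "+", "*", "'", "(", ")", "="]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)                       # subtract the max for stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict(scores, labels=LABELS):
    """Return the most likely label and its probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]
```

In Keras the same decision is usually made by taking the arg-max over the output of `model.predict`; the probability of the winning class can also serve as a rough confidence signal for rejecting unclear symbols.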

Backend

For the backend of this app, we used a custom-made API written in Flask, a micro web framework for Python. After an image is taken, it is sent to the backend running on our server, which processes it. The data goes through the OCR and then through our minimization script, which returns the minimized equation. The backend then sends that result back to the client, where it is displayed to the user.
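The algorithm behind the minimization script is not described here, so the following is only a sketch of one classic approach, the Quine-McCluskey method, under the assumption that the equation has already been converted to a list of minterms (the input combinations for which it is true). All names are hypothetical. The final cover is chosen by brute force, which is only practical for a handful of variables.

```python
from itertools import combinations


def combine(a, b):
    """Merge two implicants that differ in exactly one fixed bit, else None."""
    diff = [i for i, (x, y) in enumerate(zip(a, b)) if x != y]
    if len(diff) == 1 and "-" not in (a[diff[0]], b[diff[0]]):
        i = diff[0]
        return a[:i] + "-" + a[i + 1:]
    return None


def prime_implicants(minterms, n_vars):
    """Repeatedly merge implicants; whatever cannot be merged is prime."""
    current = {format(m, f"0{n_vars}b") for m in minterms}
    primes = set()
    while current:
        merged, used = set(), set()
        for a, b in combinations(sorted(current), 2):
            c = combine(a, b)
            if c is not None:
                merged.add(c)
                used.update((a, b))
        primes |= current - used
        current = merged
    return primes


def covers(implicant, minterm, n_vars):
    """True if the implicant pattern matches the minterm's bits."""
    bits = format(minterm, f"0{n_vars}b")
    return all(p in ("-", b) for p, b in zip(implicant, bits))


def minimize(minterms, n_vars):
    """Smallest set of prime implicants covering every minterm (brute force)."""
    primes = sorted(prime_implicants(minterms, n_vars))
    for size in range(1, len(primes) + 1):
        for subset in combinations(primes, size):
            if all(any(covers(p, m, n_vars) for p in subset) for m in minterms):
                return list(subset)
    return []


def to_expression(implicants, names):
    """Render implicants as a sum of products, e.g. "-1" with AB -> B."""
    terms = []
    for imp in implicants:
        lits = [n if bit == "1" else n + "'"
                for n, bit in zip(names, imp) if bit != "-"]
        terms.append("".join(lits) or "1")
    return " + ".join(terms) or "0"
```

For example, `to_expression(minimize([1, 2, 3], 2), "AB")` handles the function that is true for every input except A = 0, B = 0. The sketch minimizes the number of product terms; it does not break ties by literal count.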

You’ve read it all

Thanks for reading the article. If you have any questions, feel free to contact us.